A number of social media networks have denied their platforms are addictive during their appearances before an Oireachtas committee.

Executives from companies including Meta, TikTok and Snapchat faced questions from TDs and senators about harmful algorithms and inappropriate content.

Representatives from TikTok were asked by Sinn Féin TD Claire Kerrane whether they would accept that the platform can be addictive.

“We wouldn’t agree with the term addictive but that doesn’t mean we don’t take the wellbeing of children incredibly seriously,” TikTok’s Minor Safety Public Policy Lead Richard Collard responded.

“When we look at TikTok, and for instance the recommender algorithm, for us that is about making sure that on a platform with 100 million pieces of content uploaded every day, that is content that is relevant to users of our platform,” he said.

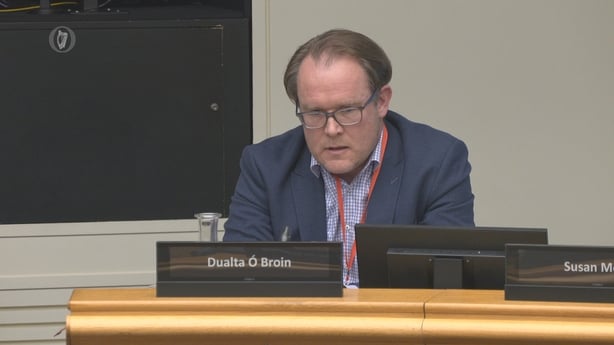

Fine Gael TD Grace Boland asked Director of Public Policy for Meta in Ireland Dualta Ó Broin about content relating to suicide and eating disorders.

“They are not allowed,” Mr Ó Broin replied.

“What verification do you do to make sure no content is slipping through?” Ms Boland asked.

Mr Ó Broin said: “We can’t obviously say that absolutely no content will slip through and we have very strict enforcement in relation to proactive enforcement and reporting.

“In terms of what is allowed to be recommended, that type of content is not allowed.”

Fine Gael TD Emer Currie asked executives from Microsoft if Xbox games were designed to be addictive.

Director of Public Policy and Digital Safety Liz Thomas responded by pointing to the parental controls and safety features that are in place.

“We would say that this is part of a holistic experience and we wouldn’t term any of it as ‘addictive mechanics’.

“It’s part of a whole approach to gameplay and thinking about what the opportunities are to make that interesting for players,” she added.

Snapchat was asked by members of the Oireachtas Committee on Children and Equality why messages on the platform disappear, amid concerns around cyberbullying and claims that it makes it harder for parents to monitor what their children are doing on the app.

Public Policy Manager for the UK and Ireland Freddie Cook said the messages disappear by default and users can, if they want, change that setting to an hour or a few days.

“The reason the messages disappear is because we want Snap to replicate day-to-day conversations,” Ms Cook said.

“So if you were at school or at work there wouldn’t be a written record of what had been shared or exchanged,” she added.


Earlier, Mr Ó Broin told the committee that Meta has “fundamentally changed” how teenagers use Instagram and Facebook.

He said the company is “constantly” innovating in response to risks to underage users.

“We fundamentally changed the approach for how teens use our services,” he said.

Mr Ó Broin said this includes the use of “teen accounts” that restrict what type of content appears on a feed, and additional steps parents can take to limit material further.

Earlier this week, media regulator Coimisiún na Meán launched investigations into Meta over recommender systems that promote content on Facebook and Instagram.

Last month, the European Commission said that Instagram and Facebook, which are owned by Meta, were in breach of the EU Digital Services Act for “failing to diligently identify, assess and mitigate the risks of minors under 13 years old accessing their services”.

Following reviews by the commission’s platform supervision team, concerns arose in relation to potential “dark patterns”, or manipulative and deceptive interface designs, that may prevent people from exercising their right to choose a recommender system feed that is not based on profiling.

A recommender system feed is a style of curation where content is not shown chronologically, but is instead curated using profile data such as past activity, including watch time, time of day, location and behaviour of similar users.

Profiling is the use of automated systems to personalise content or ads based on patterns in a person’s data or behaviour.

Earlier this week, Meta announced that it was strengthening “underage enforcement measures” by using AI to remove people under 13 from its services.

Mr Ó Broin said the company’s services are subject to regulation by multiple regulators to meet requirements in relation to the Digital Services Act (DSA), including risks to underage users.

If a platform is found in violation of the DSA, Coimisiún na Meán can apply an administrative financial sanction, including a fine of up to 6% of turnover.

Additional reporting PA